-

Unlock New Revenue with VMware Cloud Foundation 9.0 at VMware Explore: A Must-Attend Session!

I’m thrilled to be presenting at VMware Explore again this year, and I can’t wait to connect with all of you in Las Vegas! It’s always an amazing opportunity to share insights and strategies with such an innovative community. I’m especially excited for my session, “Unlock New Revenue with VCF 9.0: Deliver AI,…

-

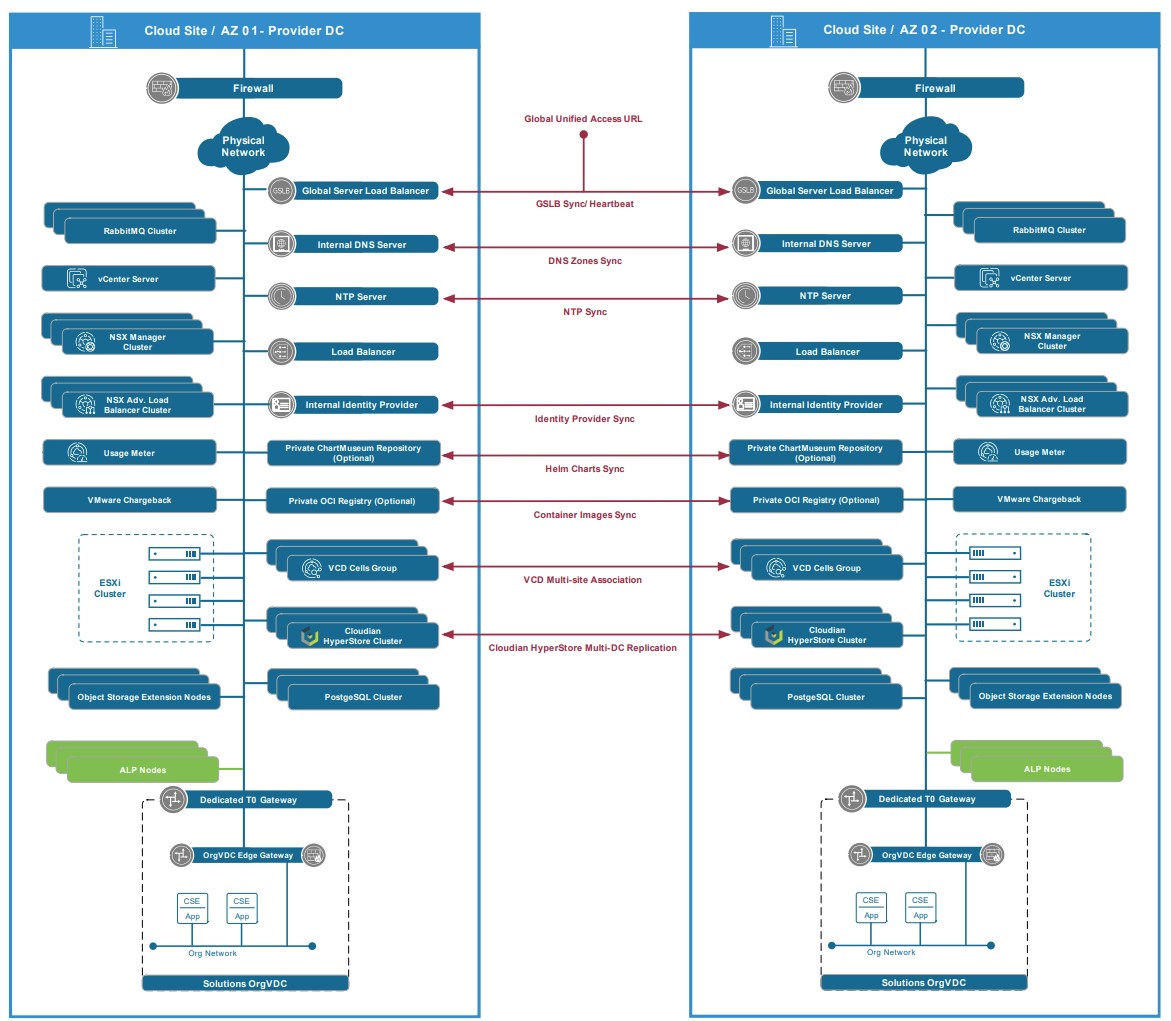

Architecting Kubernetes as-a-Service Offering with VMware Cloud Director White Paper

Delivering K8s as a Service has been a popular ask from many of our VMware Cloud Providers lately. While many have already started their journey with such an offering, others are still looking for where to start. We have been working closely with many of our providers to bring their K8s as a Service to market with…

-

VMware Tanzu Mission Control (TMC) is now officially GA for VMware Cloud Provider Partners

On June 4, 2021, VMware Tanzu Mission Control (TMC) became available to our VCPP Cloud Providers. This is exciting news for many of our Managed Service Providers that are trying to offer Kubernetes added services across multi-cloud. Tanzu Mission Control will help unlock new opportunities for VMware cloud providers to offer Kubernetes…

-

VMware Cloud Director Cannot verify the Kubernetes API endpoint certificate on this Supervisor Cluster Error

While trying to connect my VMware Cloud Director to my Tanzu Kubernetes Grid environment (TKGS) in vSphere 7 Update 2, I kept hitting a certificate error. The error appeared during the step to configure the Kubernetes policy for my Provider VDC. After following the wizard at: Resources ==> Cloud Resources ==> Provider VDCs ==>…

-

VMware Cloud Providers Feature Fridays

My colleague Guy Bartram has been leading over 30 VMware Cloud Provider focused sessions, hosting a different expert on each. I was previously hosted on the Bitnami and App Launchpad Feature Friday. I highly recommend that every VMware Cloud Provider take a look at these sessions and subscribe to the VMware Feature…

-

Cloud Director Kubernetes as a Service with CSE 2.6.x Demo

If you have been following our VMware Cloud Provider space for a while, you have probably been introduced to our Cloud Director Kubernetes as a Service offering based on VMware Container Service Extension. In the past, Container Service Extension was command line only; a nice UI was introduced in CSE 2.6.0. Here…

-

VMware Container Service Extension 2.6.1 Installation step by step

One of the most requested features in previous versions of the VMware Container Service Extension (CSE) was a native UI. As of CSE 2.6, we have added one, which makes CSE friendlier and will make it much more appealing to many of our…

-

CSE 2.6.1 Error: Default template my_template with revision 0 not found. Unable to start CSE server.

While trying to run my Container Service Extension 2.6.1 after a successful installation, I kept getting the following error: “Default template my_template with revision 0 not found. Unable to start CSE server.” To fix this, you will need to: Edit your CSE config.yaml file to include the right name of…

-

vCloud Director Container Service Extension 2.6.x is here!

As more and more of our Cloud Providers are being asked to provide K8s and container services to their customers in a self-service, multi-tenant fashion, we released Container Service Extension over 30 months ago. The goal of Container Service Extension was to offer an Open Source plugin for vCloud Director that gives…

-

VMware Octant

As I have been discovering more about K8s, I have grown to be a fan of VMware Octant. As defined by the open source project: A highly extensible platform for developers to better understand the complexity of Kubernetes clusters. Octant is a tool for developers to understand how applications run on a Kubernetes cluster.…

-

Kubernetes as a Service utilizing Nirmata and VMware vCloud Director

Over two years ago, VMware released vCloud Director Container Service Extension (CSE). The idea back then was to allow service providers to spin up Kubernetes clusters for their customers with ease using a single command line. CSE has also allowed our VMware Cloud Providers to offer Kubernetes clusters as a self-service offering, where tenants…

-

How to force delete a PKS Cluster

There are times when you want to delete a PKS cluster, but the deletion with the usual PKS delete-cluster command fails: `pks delete-cluster <PKS Cluster Name>`. This is usually due to issues with that cluster: either it failed for some reason during deployment, or it was altered in a way that destabilized it. No worries,…